A vehicle that understands the passengers it carries, that knows if they feel afraid or unwell, or if they are relaxed. Or angry. Or happy. A vehicle that is able to measure the driver’s level of concentration or stress. It sounds like science fiction, but it’s on its way to becoming a reality.

The Valencia Biomechanics Institute (IBV) is working on a system that understands and interprets human emotions in order to achieve a safer, more empathic autonomous car. It is doing so through the SUaaVE (Supporting acceptance of automated Vehicle) project, with the collaboration of nine European entities and funding from the European Horizon 2020 program.

Autonomous vehicles currently being tested offer level 2 and 3 autonomy. That is to say, they drive themselves, but they can demand our attention in certain circumstances, at which point the human driver takes over. “Level 4 or 5, which is what our project is about, are vehicles with complete autonomy,” explains José Solaz, Director of Automotive and Mobility Innovation at the IBV. “What we’re trying to do is lay the groundwork for how those future vehicles should behave.”

An empathic vehicle is one that not only looks outwards, but also focuses on its occupants. When we talk about autonomous vehicles, we always think about their efficiency, the route they can take, etc., but we usually forget that they transport people. “And people are not cargo. We are sensitive to accelerations, to turns… That is why we need an empathic vehicle, one that thinks about the occupants,” says Solaz.

HOW IT WORKS

With the help of artificial intelligence combined with classical statistics, the IBV researchers are designing a model that will allow the vehicle to adapt its driving to its occupants.

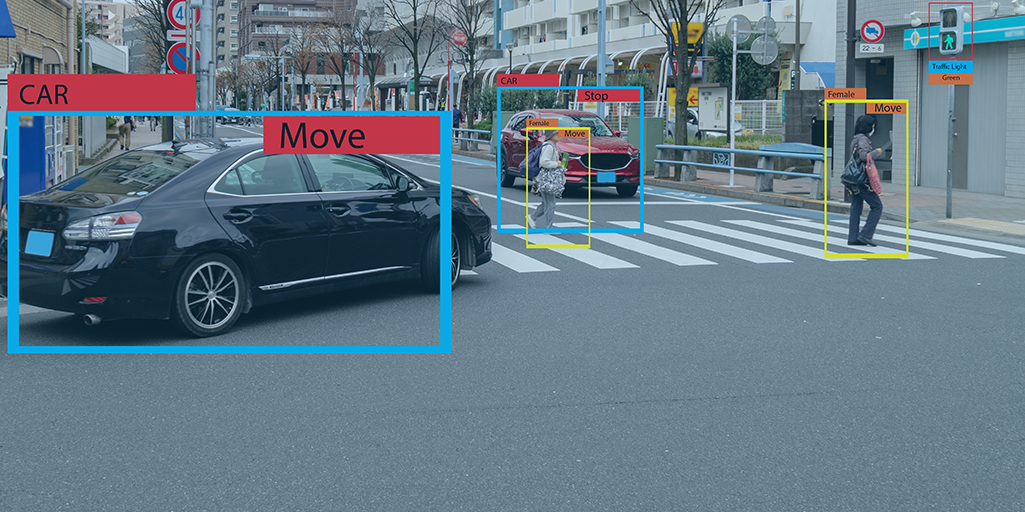

“It is working with a technology that is not fully developed,” clarifies José Solaz. “It looks inside the vehicle using cameras, radars and other sensors. (Today, in the prototype, some sensors still have wires, but in the future they will be wireless.) What the vehicle captures from you are a series of parameters such as where you are looking, if you move your face in a certain way or yawn; it obtains information from your eyebrows, from the muscles around your mouth, your heart rate, your perspiration and respiratory rate. All this provides information about the mood of the occupants. There is a mathematical model behind it that relates all these physical gestures to emotion. We have compiled a basic science that already exists and adapted it to the vehicle environment.”

He continues, “What we have done is make the vehicle capable of deducing what your emotional state is in the face of certain circumstances, which we are provoking through a fairly realistic driving simulator that puts you in potentially risky situations and in others where things are calmer. On the other hand, we have developed a series of algorithms that change the operation of the vehicle (not us directly, but the rest of the partners; it is a consortium project). Those partners are developing an on-board information system that provides different types of information depending on your mood. What each of the partners has done is develop a series of components of that system. It is not yet a real car because level 4 does not exist, but we are developing the parts that it will have.”
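To make the idea concrete, the chain Solaz describes (physical signals, then an estimated emotional state, then a change in how the vehicle drives) can be pictured with a toy example. The following is only a sketch under stated assumptions: the signal names, baselines, weights, thresholds and driving profiles are all hypothetical, and nothing here reflects the actual model or software built by the IBV or its SUaaVE partners.

```python
import math
from dataclasses import dataclass


@dataclass
class OccupantSignals:
    """Hypothetical readings for one occupant; names and units are assumptions."""
    heart_rate_bpm: float
    respiratory_rate_bpm: float
    skin_conductance_us: float  # stand-in for perspiration, in microsiemens
    brow_tension: float         # 0..1, as a face-tracking camera might report
    yawning: bool


# Illustrative resting baselines, scales and weights (invented for this sketch).
BASELINE = {"heart_rate_bpm": 70.0, "respiratory_rate_bpm": 14.0, "skin_conductance_us": 2.0}
SCALE = {"heart_rate_bpm": 20.0, "respiratory_rate_bpm": 6.0, "skin_conductance_us": 3.0}
WEIGHTS = {"heart_rate_bpm": 1.0, "respiratory_rate_bpm": 0.6, "skin_conductance_us": 0.8}


def stress_score(signals: OccupantSignals) -> float:
    """Combine normalized signals with a logistic function; result lies in (0, 1)."""
    z = sum(
        WEIGHTS[name] * (getattr(signals, name) - BASELINE[name]) / SCALE[name]
        for name in WEIGHTS
    )
    z += 1.5 * (signals.brow_tension - 0.5)
    if signals.yawning:
        z -= 0.5  # assumption: yawning is treated as a sign of low arousal
    return 1.0 / (1.0 + math.exp(-z))


def driving_profile(score: float) -> str:
    """Map the estimated stress score to a hypothetical driving style."""
    if score >= 0.7:
        return "gentle"    # softer acceleration and wider gaps
    if score <= 0.3:
        return "standard"
    return "moderate"


calm = OccupantSignals(60, 12, 1.0, 0.1, yawning=False)
tense = OccupantSignals(110, 22, 6.0, 0.9, yawning=False)
print(driving_profile(stress_score(calm)), driving_profile(stress_score(tense)))
```

A real system would presumably learn such a relationship from data like the simulator sessions described above rather than rely on hand-picked weights; the sketch only shows the shape of the pipeline.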

WHY IS IT IMPORTANT FOR AN AUTONOMOUS VEHICLE TO BE EMPATHIC?

To understand the importance of the system being developed by the IBV, we must look again at the advantages offered by autonomous vehicles.

For the Director of Automotive and Mobility Innovation at the IBV, the autonomous vehicle is “a wonderful tool” that will help to drastically reduce accidents, eliminate the problems of fatigue, drowsiness and night driving that drivers face today, and make transport more flexible for all types of passengers, because the vehicles will be shared. They will complement public transport on underserved routes.

“It is important that the vehicle knows what the passenger is feeling precisely because of the range of people who are going to ride in this kind of vehicle,” remarks José Solaz. The system being developed will also help to reinforce and increase people’s confidence in autonomous vehicles, helping us feel safe in them. To that end, the vehicle will share with its occupants everything that is happening and explain why it makes the decisions it does; for example, why it has had to take an exit.

“That empathic vehicle, apart from changing its way of operating and driving style, will also report information to passengers about what it is doing and why. In a way, it is going from an autonomous robot to a robot that has knowledge of what is happening inside the vehicle. That is why it is important when it comes to increasing people’s acceptance of a form of transport that has many advantages, but which, without user confidence, will not come into use.”

In this first phase, a total of 50 volunteers participated in the experience of travelling in an empathic autonomous car. The sample was made up of drivers between the ages of 25 and 55, with a balanced distribution of women and men. Future lines of research will focus on optimizing the model through training to obtain high levels of accuracy not only from simulator experiences, but also by monitoring drivers and passengers in real driving conditions.

WHEN WILL FULLY AUTONOMOUS CARS BEGIN OPERATING?

The cars we drive today have level 1 autonomy: they warn you if you change lanes, if you get too close to the car in front, etc. According to José Solaz, it remains to be seen how level 2 and 3 technology can be incorporated.

“The fully autonomous vehicle will probably operate in what are dedicated lanes, specific lanes that already exist for autonomous vehicles. In fact, there are already autonomous vehicles in the subway system. So they will circulate on highly controlled routes, so that little by little the technology is put into operation in low-risk environments.”

“We are on the cusp of seeing vehicles with level 2 and 3 autonomy because the legislation has changed to allow it. We will soon see them on the streets,” he predicts.